Configuring File Indexing

To search data in the Live Location or Storage Location included in

In the IPRO Admin UI, you can set up and run a job for file indexing only, or configure a job for file archiving and simultaneous file indexing. For more information, see Configuring File Archiving.

- If you previously configured a Direct From Source type of File Archiving job, you do not need to set up and run a separate File Indexing job to search the archived data.

- If you intend to configure an Index Search Query type of File Archiving job, you must first set up and run a File Indexing job. With File Indexing configured, you can search archived data and perform policy-based file archiving in the indexed location.

To create a File Indexing job in the IPRO Admin UI, follow the steps below.

A File Indexing job is also called a crawler job. You must create a separate crawler job for each location whose files you want to index.

- Log in to the IPRO Admin UI.

- Go to Archiving > Agents > File Indexing.

- At the bottom-left of the License tab, click Create.

- Enter a meaningful name for the job.

- The newly created job appears in the navigation tree under File Indexing. Click the job to open it.

- Navigate to the Criteria tab to begin configuration tasks. Certain default settings are preselected.

- In the Data Source section, select the location you want the crawler to crawl.

NOTE

If you previously configured the DFSRoot connector to point to the DFS root location and intend to select it as the Data Source, see Configuring Indexing for the DFS Root.

- In the Indexer section, select one of the following:

- Incremental: Indexes only new data since the last index update. This is an efficient option if your users need the latest data or if new data is constantly being created in the location.

- Recreate: Rereads and reindexes the entire contents of the location. The time this takes depends on the size of the location.
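The difference between the two Indexer options can be illustrated with a minimal Python sketch. It is a conceptual model only, not IPRO's implementation: it assumes that "new data" means files whose modification time is later than the previous index run, and the names `FileEntry`, `select_files_to_index`, and the mode strings `"incremental"` and `"recreate"` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class FileEntry:
    path: str
    modified: datetime  # last modification time of the file (assumed change signal)


def select_files_to_index(files: List[FileEntry], mode: str,
                          last_index_time: Optional[datetime] = None) -> List[FileEntry]:
    """Return the files a crawler run would read (hypothetical model)."""
    if mode == "recreate":
        # Recreate: reread the entire location, regardless of earlier runs.
        return list(files)
    if mode == "incremental":
        if last_index_time is None:
            # Assumption: with no previous index, every file counts as new.
            return list(files)
        # Incremental: only files newer than the last index update.
        return [f for f in files if f.modified > last_index_time]
    raise ValueError(f"unknown mode: {mode!r}")


last_run = datetime(2024, 1, 1)
files = [
    FileEntry("a.docx", datetime(2023, 12, 1)),
    FileEntry("b.pdf", datetime(2024, 2, 1)),
]
print([f.path for f in select_files_to_index(files, "incremental", last_run)])  # ['b.pdf']
print([f.path for f in select_files_to_index(files, "recreate", last_run)])     # ['a.docx', 'b.pdf']
```

In this model an Incremental run reads only the files newer than the last run, which is why it suits locations with a constant flow of new data, while a Recreate run always returns every file, so its cost grows with the size of the location.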

- In the Options section, you can specify the (sub)folders to be indexed, either by inclusion or by exclusion. Select one of the following:

- Extract files from all folders: Does not restrict the scope of the crawling job to specific (sub)folders.

- Include only the following folders: Restricts the scope of the crawling job by including only the specified (sub)folders. Enable Recursive to apply the inclusion policy to the subfolders of a specified folder.

- Process all folders except the following: Restricts the scope of the crawling job by excluding the specified (sub)folders. Enable Recursive to apply the exclusion policy to the subfolders of a specified folder.
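The three scope options and the Recursive setting can be modeled as a single predicate. This is a hypothetical sketch, not IPRO's matching logic: `should_crawl`, the policy strings `"all"`, `"include"`, and `"exclude"`, and the POSIX-style example paths are assumptions for illustration.

```python
from pathlib import PurePosixPath
from typing import Iterable


def _folder_matches(folder: PurePosixPath, rule: PurePosixPath, recursive: bool) -> bool:
    """True if `folder` is the rule folder itself, or (when recursive) one of its subfolders."""
    if folder == rule:
        return True
    # Compare whole path components, so /share/hr does not match /share/hr-archive.
    return recursive and rule in folder.parents


def should_crawl(file_path: str, policy: str, folders: Iterable[str] = (),
                 recursive: bool = False) -> bool:
    """Decide whether a file falls inside the crawler's scope (hypothetical model)."""
    parent = PurePosixPath(file_path).parent
    matched = any(_folder_matches(parent, PurePosixPath(f), recursive) for f in folders)
    if policy == "all":        # Extract files from all folders
        return True
    if policy == "include":    # Include only the following folders
        return matched
    if policy == "exclude":    # Process all folders except the following
        return not matched
    raise ValueError(f"unknown policy: {policy!r}")


rules = ["/share/hr"]
print(should_crawl("/share/hr/2024/review.pdf", "include", rules))                  # False
print(should_crawl("/share/hr/2024/review.pdf", "include", rules, recursive=True))  # True
print(should_crawl("/share/it/notes.txt", "exclude", rules))                        # True
```

Matching whole path components rather than string prefixes keeps a rule for /share/hr from accidentally covering a sibling folder such as /share/hr-archive.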

- Click Save. The job specifications are now configured.

- Select the Job Settings tab.

- In the Description field, enter a description for the index job. This is helpful if there are multiple jobs of the same type.

- In the Job Priority section, set a priority, choosing from Low, Normal, and High. Normal is the default priority.

NOTE

This setting is useful when multiple jobs run concurrently and you want to control which job takes priority for thread allocation. The job priority you select determines which job in the queue the JobManager process selects next; JobManager is responsible for allocating queued job threads to available thread slots on the archive nodes. Prioritization is categorized according to user account. For example, you may want to assign a higher priority to crawling files created by VIP users. If a normal-priority job is already running and using all available job threads, setting a new job's priority to High and running it directs any freed threads to the new high-priority job. This feature works with load balancing to control how crawling jobs are distributed.

- In the Mode section, select the mode in which the index operates:

- Continuous: Indexing runs continuously without interruption, so the index stays up to date with all changes in the location.

- Pause: Stops indexing. Use this option when the initial index has been built and there is no need to keep indexing.

- Pause and delete indexes: Stops indexing and deletes the existing index.

- Click Save at the bottom-left of the screen.

The job runs according to the configured schedule. Alternatively, click Run Now to start the job immediately.

- (Optional) Open the Log Settings tab. Logging provides detailed job logs for troubleshooting. Choose from the following log settings:

- Disable detailed logging (default setting)

- Enable logging

- Enable logging only for the next run

- You can also have email notifications sent when a job completes, with attachment options.

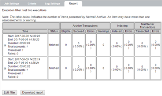

For more information about logging, see Configuring Logging.

- (Optional) In the Report tab, check the progress of the job.

For more information about reviewing reports, see Viewing Reports.